The Internet of Things (IoT) and Big Data are two terms that are even vaguer than 5G, so this text will be significantly less technical in its explanations.

These two phenomena have one thing in common: they appeared merely because they became possible, and nothing more. That is, certain technologies became cheap enough that one could experiment with IoT and Big Data without investing significant funds (that is, without much risk).

The problem with IoT is that it is largely a marketing term with vague technical content. Almost anything can be labeled IoT, and as a result any analysis is very difficult. Let’s start from the beginning: the name IoT itself contains the word internet. What is the internet? It is a “network of networks”, an association of independent networks in which each node can communicate with any other, which is made possible by the relevant protocols.

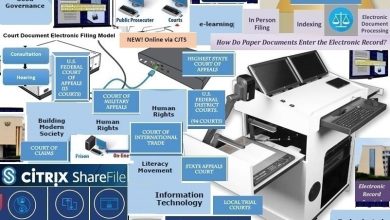

IoT most often refers to any digital interaction between devices in which a person does not participate explicitly or which is not initiated by a person. In this sense, IoT currently uses quite a lot of different protocols, many of which are local. That is, they cannot be used in a “network of networks” made up of mutually independent segments. Therefore, it is often more correct to talk about machine-to-machine interactions and about process automation, of which these interactions are often a part. This process is not new: it started back in the 18th century and became the cause of the first industrial revolution. It continues, drawing on each new capability that technological development brings, and it of course affects not only factories but all spheres of life.

Why do we need different protocols? Because device developers can have different technical requirements. In one case the protocol should be optimized for energy consumption (for example, with a huge number of autonomous sensors, simply replacing the batteries becomes an expensive process); in another, for universal connectivity to a data transmission network; in a third, for matching user habits. This list of criteria is, of course, not complete.

An example of a typical local installation is the industrial Internet of Things, IIoT. In fact, this is nothing but a new term for the same old automation of production, one that emphasizes the move of this automation to a new technological level. It differs fundamentally from home IoT, in particular in: specialization (the elements are far from arbitrary and include only those related to a specific production process), localization (the system can be geographically “stretched” if we are talking about, say, a pipeline, but everything is still localized along it), and standardization (at home we can have a bunch of different devices working on different principles and unaware of each other, whereas in production everything must be linked into a single mechanism). The development of IIoT solutions, like that of the automation systems preceding them, is determined by purely economic factors.

However, far from all machine-to-machine interactions are local. Some are implemented directly over internet protocols (this is how IoT scenarios are supposed to be implemented in 5G, for example). Others assume a tiered structure: a special protocol (or a set of them) is used for local interactions, while the central gateways of local installations interact with each other and/or with management platforms over the regular global internet using its standard protocols. An additional complication is that, to communicate over the internet (directly, or through central gateways, as described above), each node must have a unique IP address. For the older (and therefore more widespread) version of the protocol, IPv4, there is now a shortage of addresses, which places additional restrictions on IoT architectures. In the newer version, IPv6, addresses are plentiful, but so far not all telecom operators in the world have deployed support for it on their equipment. In Russia, deployment began only recently, and only a handful of operators have implemented it.
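To give a sense of the scale behind the address shortage, here is a minimal sketch using Python's standard `ipaddress` module. The variable names are illustrative, and the `/64` prefix below is taken from the range reserved for documentation; it is an assumption chosen for the example, not a real deployment.

```python
import ipaddress

# Total size of each address space (the "/0" network covers everything).
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses
ipv6_total = ipaddress.ip_network("::/0").num_addresses

# A single /64 IPv6 subnet (documentation prefix, used here only as an example).
subnet_total = ipaddress.ip_network("2001:db8::/64").num_addresses

print(f"IPv4 addresses in total: {ipv4_total:,}")
print(f"IPv6 addresses in total: 2**{ipv6_total.bit_length() - 1}")
print(f"One IPv6 /64 subnet holds {subnet_total // ipv4_total:,} "
      f"times the entire IPv4 space")
```

The whole IPv4 space is smaller than the number of devices people already expect to connect, which is one reason why tiered designs hide many local devices behind a single gateway address.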

At the same time, many IoT developers focus on the hardware and pick ready-made software modules for their product, whichever seem best suited to their purposes, including the modules responsible for networking. This approach poses additional challenges, in particular the well-known cybersecurity issues. These modules are often carelessly written, vulnerabilities are regularly found in them, and products based on them can be used both for unauthorized access to an enterprise's internal networks (a well-known precedent is the penetration of a secure casino network via an IoT aquarium control device) and for mounting attacks on other resources (the most famous example is a huge DDoS attack on the website of the journalist Brian Krebs from a botnet of IoT devices: surveillance cameras, smart video players, etc.). For the same reasons, a significant proportion of IoT devices do not support firmware updates and ship with identical service account names and passwords. In general, they exhibit all the “growing pains” that are so well known to security experts. This seemingly narrow aspect shows that the IoT world as a whole is still at an early stage of development, when not enough experience has been accumulated. It has yet to grow up.

This is also evident in consumer aspects. Some time ago, I wondered why modules for pairing with external devices were not being added to absolutely everything, since nowadays they cost next to nothing. Now many devices (apparently for precisely this reason) have received such interface modules, but their creators have not yet figured out what functions (and especially new functions!) should be implemented through this interface. By and large, it is the lack of ideas that limits the development of IoT: you need to offer the market something that users are willing to pay for, and preferably not once but regularly: the initial purchase, support, purchase of a newer product, and so on in a cycle. There is a certain crisis of ideas of this kind. In my opinion, this only means that, for the time being, the IoT world will see normal organic growth rather than ultrafast, explosive growth. Everyone will get used to the fact that household appliances can be controlled from a smartphone, for example. And sooner or later, quantity will turn into some kind of quality, which is difficult to predict now.

Big Data: an old joke defines it as data that does not fit into an MS Excel spreadsheet. This is not far from the truth: sources of large amounts of data have long existed, but the means to store and process them were not always widely accessible. In recent years, as the cost of storing data became very low and well-working processing algorithms appeared, the term Big Data appeared as well, meaning widely available technologies for processing large amounts of information.

In some applications (for example, internet search engines), the need to work with large amounts of information arose a long time ago. Later, many companies found that they could optimize their operations using their own data, accumulated in the course of their work. As a result, work in this direction quickly became very popular, and “Big Data” joined the list of fashionable phrases.

However, it is worth noting that although simple optimization based on Big Data analysis is usually achieved rather quickly, further steps in this direction are significantly harder. The reason is that data processing algorithms alone do not create new meaning and cannot formulate a question: with their help you can only get an answer to a question that a person has posed. And, as you know, to ask the right question, you need to know half the answer. The simplest approach serves as an example: searching for all sorts of correlations among different data sets. A discovered correlation makes little sense as long as there is no model to explain it. The correlation may be accidental (a well-known example: in the 2000s, the divorce rate in Maine correlated very well with per capita margarine consumption in the USA). The correlation may also not indicate a direct relationship (a textbook example: the number of drownings per month correlates well with ice cream sales, simply because in winter most people neither swim nor buy ice cream). Accordingly, in the absence of a sound model, the decisions taken can even worsen the economic situation (in the examples given, no matter how much money we spend on combating the consumption of margarine and ice cream, neither the divorce rate in Maine nor the number of drownings will change).
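The ice cream example can be illustrated with a small sketch. All numbers below are synthetic and invented for illustration (they are not real statistics), and the function and variable names are hypothetical. The sketch assumes that both series depend linearly on a common hidden factor, the monthly temperature: the raw correlation between sales and drownings comes out close to 1, but once the temperature is accounted for, almost nothing is left.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

def residuals(ys, xs):
    """What remains of ys after removing its least-squares linear fit on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return [(y - my) - slope * (x - mx) for x, y in zip(xs, ys)]

# Synthetic inputs (assumed): mean monthly temperature and two unrelated noises.
temperature = [-5, -3, 2, 9, 15, 20, 23, 22, 16, 9, 2, -3]
ice_noise = [3, -2, 1, -3, 2, -1, 1, 3, -2, -1, 2, -3]
drown_noise = [1, -1, -1, 1, -1, 1, 1, -1, 1, -1, 1, -1]

# Both series are driven by temperature; neither depends on the other.
ice_cream_sales = [100 + 10 * t + e for t, e in zip(temperature, ice_noise)]
drownings = [10 + t + 2 * e for t, e in zip(temperature, drown_noise)]

# Correlation as a naive analysis would see it.
raw_corr = pearson(ice_cream_sales, drownings)

# Correlation after the effect of temperature is removed from both series.
partial_corr = pearson(residuals(ice_cream_sales, temperature),
                       residuals(drownings, temperature))

print(f"raw correlation:               {raw_corr:.2f}")
print(f"after removing temperature:    {partial_corr:.2f}")
```

The second number can only be computed by someone who already suspects that temperature matters, which is exactly the point: the model has to come from a person, not from the data.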

That is, Big Data needs specialists who are able to handle it: data analysts. The specialty is new, but there are now training courses that prepare them. In this sense, the expression “data is the new oil” is very accurate, because crude oil is not much use for anything either; it must be processed to obtain valuable materials and products. The amount of available data keeps growing, and IoT in all its manifestations is expected to be a powerful new source of it. How the processing algorithms will develop, and what kind of science will arise from those developments in data analytics, is unknown. It is only clear that progress in this direction will continue.

A certain danger is connected with this. The accumulated data sets may in the future yield information whose nature is currently unknown to us. In particular, this may be sensitive data that we do not even suspect is there (there have already been precedents of this kind: in one curious case, the Target retail chain, based on Big Data analysis, accidentally revealed a teenage girl's hidden pregnancy from her innocuous purchases). Thus the question arises: if it is not known what Big Data analysis will be able to extract from an existing data set in the future, how should this data be protected? It is clear that if we proceed from the worst-case scenario, namely that any sufficiently large data set may contain, for example, state secrets, then all IoT installations would have to be curtailed, because the systems for transmitting and storing information in most of them fall far short of the requirements for systems that handle state secrets. Although this is a somewhat (but only slightly) exaggerated example, it shows that a sound balance is needed between requirements and regulations on the one hand and technological development on the other.